Welcome back, Insider. Your LLM claims it can handle a million tokens. A new study says it can barely use 70,000 before performance collapses to a coin flip.

Today: the long-context myth exposed, Alibaba's open-weight challenger, and vibe-coding's $100M breakout.

🔒 The edge your competition doesn't have (yet)

Claude Co-Work can change the way you work. In 10 minutes you'll know exactly how. Not for sale — only unlocked by referring 1 person to AI Edge.

The Essentials

1. Alibaba Drops Qwen 3.5 — Open-Weight, Agent-First, and Gunning for GPT-5: Alibaba's Qwen team released Qwen3.5-397B, a sparse MoE model packing 397B total parameters but activating only 17B at inference. The result: 8.6x–19x faster decoding than dense equivalents at 60% lower cost. The model ships with a 1M token context window, native multimodal capabilities via early fusion, and a fully open Apache 2.0 license. Alibaba claims it outperforms GPT-5.2, Claude Opus 4.5, and Gemini 3 Pro on 80% of benchmarks — including 91.3 on AIME26 and 83.6 on LiveCodeBench v6. If independent tests hold up, this is the strongest open-weight model available today.

2. OpenClaw's Creator Joins OpenAI — But the Security Mess Stays Behind: Peter Steinberger, creator of OpenClaw — the open-source AI agent with 196K GitHub stars — is joining OpenAI. Sam Altman called him "a genius with amazing ideas about very smart agents." OpenClaw will continue as an open-source project with OpenAI's backing. The timing is notable: Bitdefender recently found nearly 900 malicious skills on OpenClaw's ClawHub registry — roughly 20% of all packages — plus a critical RCE vulnerability and 135K+ exposed instances. OpenAI is acquiring talent, but also inheriting a serious security problem.

3. Emergent Hits $100M ARR in Eight Months — Vibe-Coding's Fastest Ramp Ever: India-based Emergent crossed $100M in annual recurring revenue just eight months after launch, doubling from $50M in a single month. The vibe-coding platform now has 6M+ users across 190 countries, with 70% having zero coding experience. Over 80% of new projects are mobile apps. Emergent raised a $70M Series B in January at a $300M valuation — SoftBank's first new India bet in four years. The no-code wave isn't slowing down.

The Headline

Your LLM's Million-Token Context Is a Marketing Number

The test that broke them. A new study from February 2026 tested GPT-5, Grok-4, GPT-4, and Gemini 2.5 Pro on real-world long-context tasks — not synthetic benchmarks, but organic Reddit threads requiring models to find, synthesize, and reason across thousands of posts. The results are uncomfortable. Beyond 5,000 posts (~70K tokens), every model's accuracy dropped to 50–53%. That's coin-flip territory. GPT-5 handles a million tokens on paper. In practice, its useful window is roughly 7% of that.

Precision vs. recall — a critical split. Here's the nuance worth understanding. GPT-5 maintained 95% precision at maximum context length — when it gave an answer, it was almost always correct. But recall collapsed. The model simply stopped finding relevant information buried in long documents, defaulting to silence rather than hallucination. That's a better failure mode than making things up, but it means critical data in your 200-page contract or codebase could go completely unnoticed.
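To make the split concrete, here is a minimal sketch with made-up numbers (they are illustrative assumptions, not figures from the study, which reports only the 95% precision). Suppose 100 posts are relevant and the model surfaces 20, of which 19 are correct. The helper name `precision_recall` is hypothetical.

```python
def precision_recall(returned, relevant):
    """Precision: share of returned items that are relevant.
    Recall: share of relevant items that were returned."""
    returned, relevant = set(returned), set(relevant)
    hits = returned & relevant
    precision = len(hits) / len(returned) if returned else 0.0
    recall = len(hits) / len(relevant) if relevant else 0.0
    return precision, recall


# Illustrative assumption: 100 relevant posts, identified by IDs 0..99.
relevant = set(range(100))
# The model surfaces 20 posts: 19 relevant ones plus one wrong one (ID 999).
returned = set(range(19)) | {999}

p, r = precision_recall(returned, relevant)
print(f"precision={p:.2f} recall={r:.2f}")  # precision=0.95 recall=0.19
```

Almost everything the model says is right, yet four out of five relevant posts never show up. That is the failure mode described above: quiet omission rather than wrong answers.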

The "lost in the middle" fix — and the new problem. One piece of good news: the classic context rot pattern — where models ignore information in the middle of long inputs — is largely resolved in GPT-5 and Grok-4. They retrieve more uniformly across positions. But solving positional bias didn't fix capacity limits. The bottleneck shifted from where models look to how much they can actually process. A million tokens is a container size, not a comprehension guarantee.

What this means for you. If you're building RAG pipelines, agent workflows, or enterprise tools that depend on long-context performance, these findings are a reality check. Chunking, retrieval strategies, and smart context management still matter — possibly more than ever. The gap between advertised context windows and practical usability isn't a bug. It's the current state of the technology. Plan accordingly.
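One way to act on this is to treat the context window as a budget and fill it only with the most relevant material. The sketch below is a rough illustration, not a production retriever: `estimate_tokens` and `pack_context` are hypothetical names, the token count is a crude one-token-per-word assumption, and relevance is plain keyword overlap where a real pipeline would likely use embeddings.

```python
def estimate_tokens(text: str) -> int:
    # Crude assumption: one token per whitespace-separated word.
    # Swap in your model's real tokenizer for accurate counts.
    return len(text.split())


def pack_context(posts, query, budget_tokens):
    """Greedily select the posts that best match the query and fit the budget."""
    query_terms = set(query.lower().split())

    def score(post):
        # Relevance here is just the number of shared words with the query.
        return len(query_terms & set(post.lower().split()))

    selected, used = [], 0
    for post in sorted(posts, key=score, reverse=True):
        if score(post) == 0:
            break  # everything after this point is irrelevant
        cost = estimate_tokens(post)
        if used + cost > budget_tokens:
            continue  # too big for the remaining budget, try a smaller post
        selected.append(post)
        used += cost
    return selected, used


posts = [
    "refund policy changed in march",
    "great weather today",
    "the refund took two weeks to arrive",
    "policy update: refund window is now 30 days",
]
selected, used = pack_context(posts, "refund policy", budget_tokens=14)
print(selected, used)
```

In practice you would set `budget_tokens` well below the advertised window. The study's roughly 70K-token ceiling is one reasonable starting point to test against your own data.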

The Edge

Turn novelty into your competitive advantage: How to create McKinsey-grade industry reports with Kimi K2.5

Go to Kimi and sign up

Select ‘Kimi K2.5 Agent’ as your model and enter your prompt

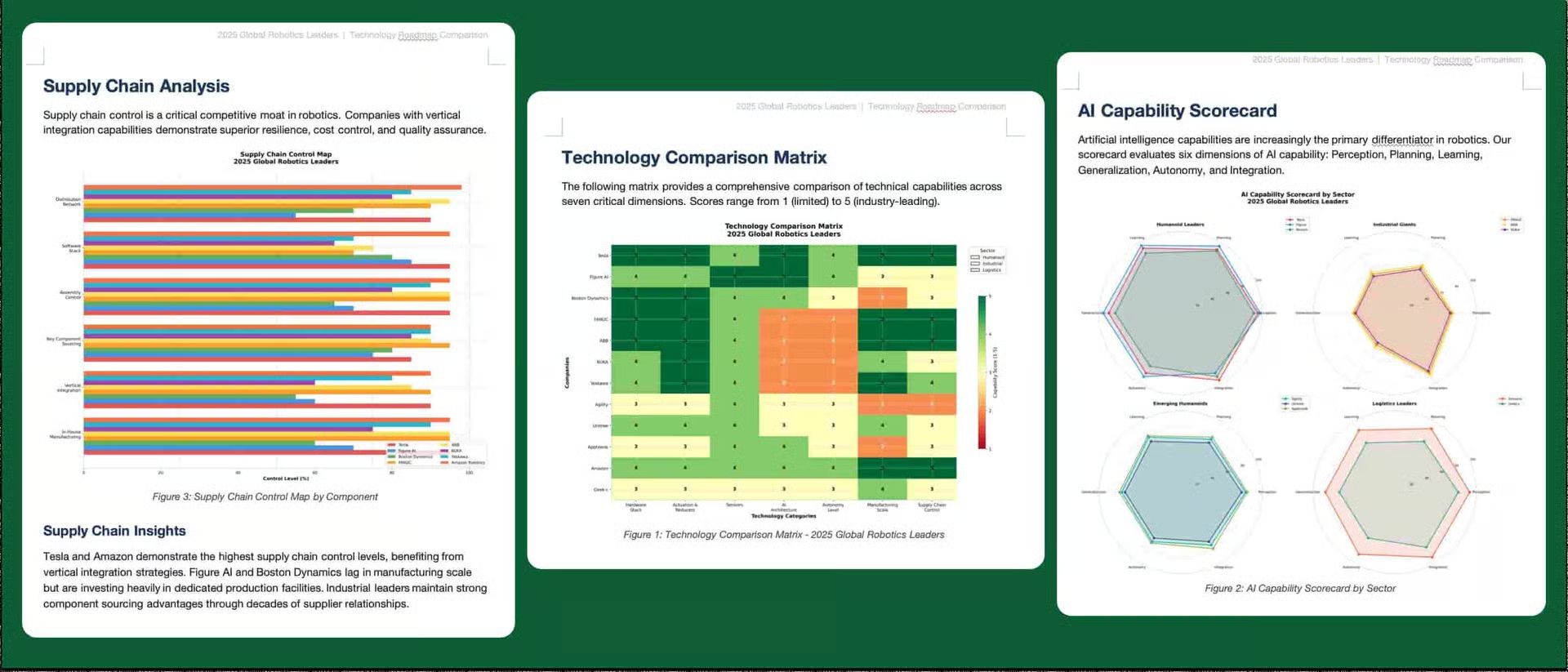

Sample Prompt: Build a “2025 Global Robotics Leaders, Technology Roadmap Comparison” report. Analyze 12 top robotics companies (humanoid, industrial, logistics). Compare: hardware stack, actuation & reducers, sensors, AI architecture (end-to-end vs modular), autonomy level, manufacturing scale, and supply-chain control. Include: Technology Comparison Matrix, AI Capability Scorecard, Supply Chain Map, and a 2x2 Quadrant Chart positioning companies. Insert each company’s official logo in company profiles and in the quadrant visualization. Style: Institutional equity research report with clean tables and strategic graphics.

Within a few minutes, you’ll get a Word file with consulting-level data visualization, technical heat-maps, and strategic frameworks

Productivity

4 Tools Worth Your Time

✨ QuikAuthor: Convert videos, PDFs, and text prompts into interactive, gamified e-learning courses.

📖 AIWriteBook: Write and publish a book on KDP in hours with AI.

📱 AptiBuild: Turn your hidden talents into profitable app ideas in minutes.

💼 Reztune: Instantly rewrite and format your resume for any job.

Ready to Use

High-Converting Landing Page Copy

Prompt: Act as a professional copywriter with expertise in direct response and digital marketing. Write a high-converting landing page for a product or service in the [your niche, e.g., online coaching, SaaS tool, digital templates] industry.

The purpose of the landing page is to [goal, e.g., collect leads, sell a product, book discovery calls].

Include the following sections:

• An attention-grabbing headline

• A compelling subheadline

• A short, engaging hook to introduce the offer

• A bullet list of 4-6 benefits (not features)

• A strong call-to-action section with button text

• A short "About the Creator" bio to build trust

• A section that addresses common objections or hesitations

• Optional: A 2-line customer testimonial

Keep the tone [desired tone: friendly / professional / bold], and make the writing persuasive, easy to scan, and emotionally compelling.

Use simple language and formatting that converts well on mobile.

Source: Digital Hustle Studio

Cinematic Motion Photography

NanoBanana Prompt: Cinematic motion-blur photography shot on a Leica SL3 with a 50mm Summilux at f/2.8, captured from a chest-level frontal angle using slow shutter panning. Lighting setup: flat overcast daylight acting as soft diffusion, allowing long blur trails without harsh contrast. Color tone: muted concrete greys and pale skin neutrals inspired by A24 films. Subject: a Gen-Z male model standing completely still, sharp eyes, symmetrical face, real skin pores visible, wearing understated fashion. Crowd action: hundreds of commuters rushing past in all directions, bodies stretched into smooth horizontal and diagonal blur. Emotion: quiet rebellion through stillness. Aesthetic: editorial calm vs chaos. Post: clean motion physics, fine grain, no glitches, no face distortion, no warped anatomy. --ar 4:5 --raw

Whenever you’re ready to take the next step

AI won't replace you. Someone who knows how to use it will. This free 5-day course teaches you the essentials: effective prompts, automation fundamentals, and when agents beat workflows. Ready to level up?